ACM MM 2025 · BNI Track · Oral

CONCIL: Continual Learning for Multimodal Concept Bottleneck Models

Official project page

TL;DR

CONCIL formulates concept/class incremental updates in CBMs as recursive analytic solutions, reducing forgetting while remaining efficient for continual multimodal learning.

Abstract

Concept Bottleneck Models (CBMs) enhance the interpretability of AI systems by bridging visual input with human-understandable concepts. However, existing CBMs typically assume static datasets, which limits their adaptability to real-world, continuously evolving multimodal data streams. We define a novel continual learning task for CBMs: concept-incremental and class-incremental learning (CICIL). This task requires models to continuously acquire new concepts and classes while robustly preserving previously learned knowledge. We propose CONCIL (Conceptual Continual Incremental Learning), a framework that reformulates concept and decision layer updates as linear regression problems, eliminating gradient-based optimization and effectively preventing catastrophic forgetting. CONCIL relies solely on recursive matrix operations, rendering it computationally efficient and suitable for real-time and large-scale multimodal applications. We provide a theoretical proof of “absolute knowledge memory” and demonstrate that CONCIL significantly outperforms traditional CBM methods in both concept- and class-incremental settings.

Task: CICIL

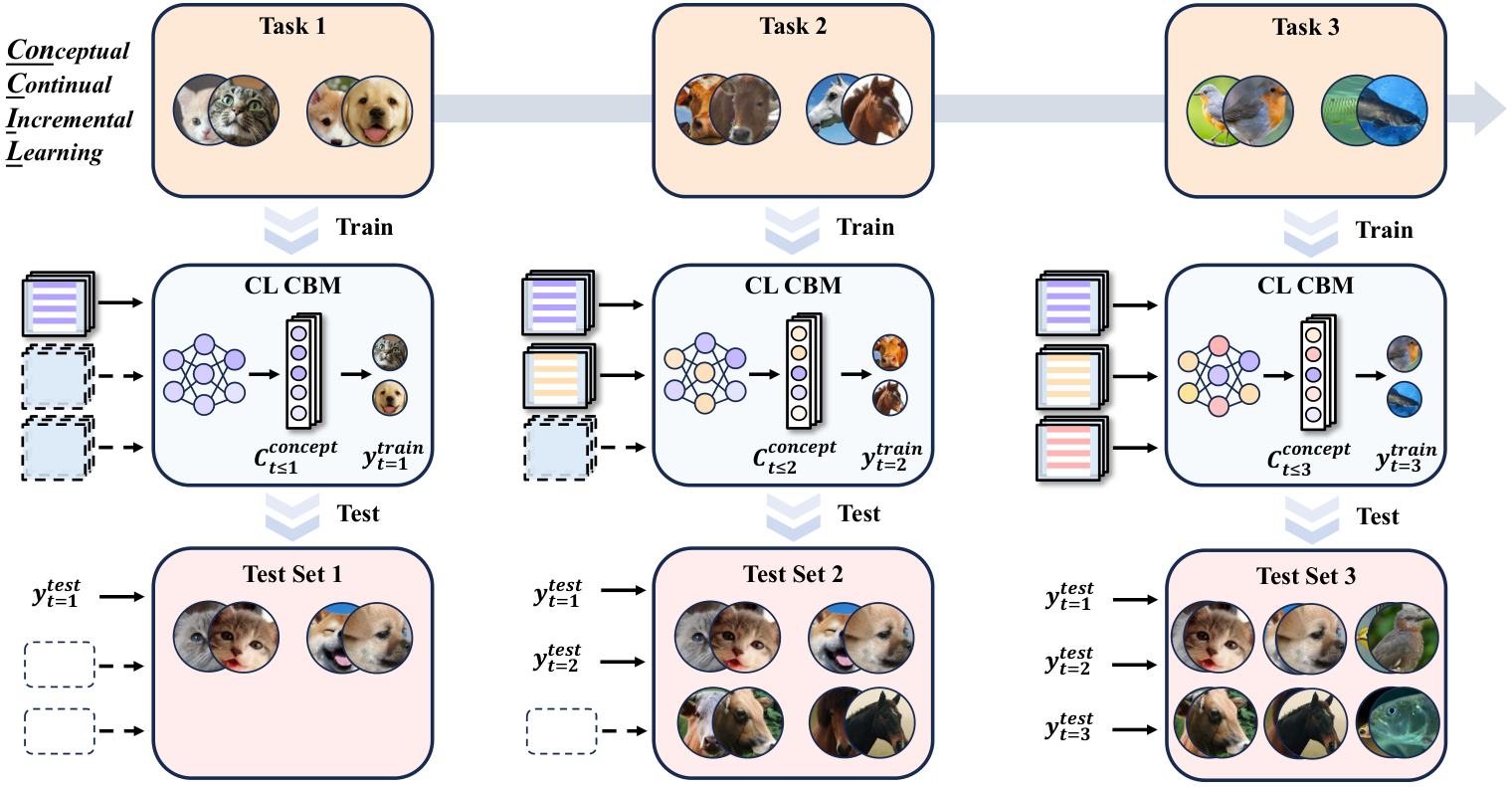

The CICIL task proceeds in sequential phases with growing concept and class sets. Each phase (task) provides training and test data consisting of inputs x, concept vectors c, and labels y.

Figure 1: Concept-Incremental and Class-Incremental Continual Learning (CICIL) for CBMs.
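The growing label spaces can be illustrated with a small sketch. This is not code from the CONCIL repository: the `Phase` class, the helper functions, and the concept/class names are hypothetical, and the sketch assumes binary concept vectors and that each phase only adds new concepts and classes.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    """One CICIL phase: the concepts and classes it introduces (hypothetical structure)."""
    new_concepts: list
    new_classes: list


def cumulative_label_spaces(phases):
    """Return, for each phase, the full concept and class lists known so far."""
    concepts, classes, spaces = [], [], []
    for p in phases:
        # Append only unseen names so earlier indices never shift.
        concepts = concepts + [c for c in p.new_concepts if c not in concepts]
        classes = classes + [k for k in p.new_classes if k not in classes]
        spaces.append((list(concepts), list(classes)))
    return spaces


def encode_concepts(active, concept_space):
    """Binary concept vector c over the concepts known at the current phase."""
    return [1 if name in active else 0 for name in concept_space]


# Toy example with made-up concept and class names.
phases = [
    Phase(["has_wings", "has_beak"], ["sparrow", "eagle"]),
    Phase(["has_stripes"], ["zebra"]),
]
spaces = cumulative_label_spaces(phases)
c = encode_concepts({"has_stripes"}, spaces[1][0])  # c == [0, 0, 1]
```

Keeping earlier indices fixed means a concept vector from an earlier phase can be zero-padded to the current dimensionality, which matches the "expanding dimensions" picture of the task.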

Method: CONCIL

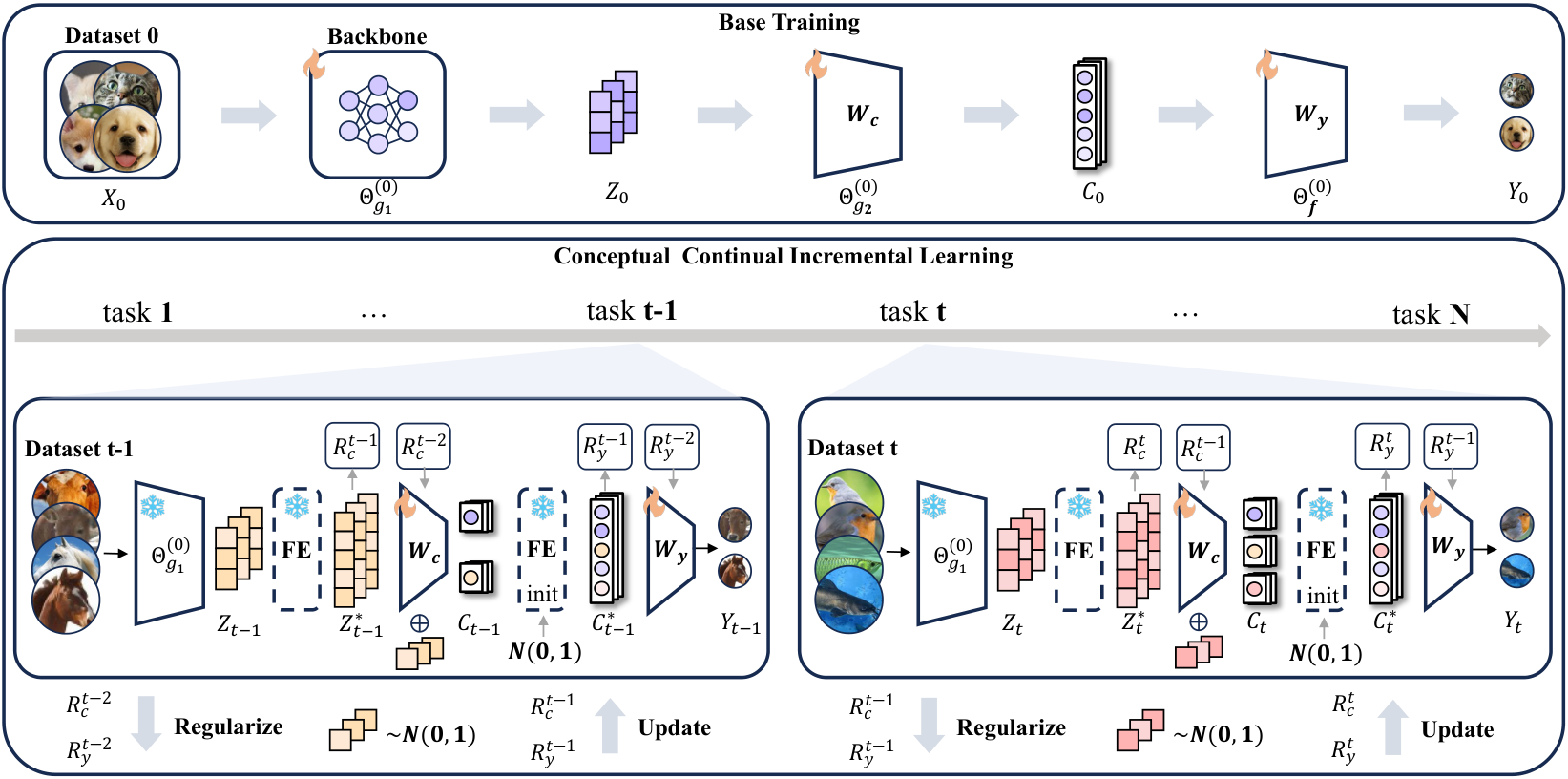

Base training (Task 0) jointly trains the backbone, the concept layer, and the classifier; the backbone is then frozen. Incremental tasks update the concept layer and the classifier with recursive analytic solutions, expanding the concept and class dimensions as new ones arrive.

Figure 2: CONCIL framework overview.
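To make the idea of a recursive analytic update concrete, here is a minimal sketch of recursive ridge regression on frozen features. It is an illustration under stated assumptions, not the authors' implementation: it absorbs one sample at a time with the Sherman-Morrison identity (a batch-size-one stand-in for per-phase matrix updates), and every function name, the regularizer `gamma`, and the toy data are hypothetical. The final check confirms that the recursively updated weights satisfy the normal equations of ridge regression on all data seen so far, even though earlier samples are never revisited.

```python
# Illustrative sketch only; names and data are hypothetical.

def make_state(dim, gamma=1.0):
    """Start from R = (gamma * I)^-1 and a weight matrix with no output columns."""
    R = [[(1.0 / gamma) if i == j else 0.0 for j in range(dim)] for i in range(dim)]
    W = [[] for _ in range(dim)]
    return R, W


def expand_outputs(W, n_outputs):
    """Zero-pad W with new columns when a phase introduces new concepts or classes."""
    for row in W:
        row.extend([0.0] * (n_outputs - len(row)))


def update(R, W, x, y):
    """Absorb one (feature, target) pair without touching earlier data."""
    d = len(x)
    Rx = [sum(R[i][j] * x[j] for j in range(d)) for i in range(d)]
    denom = 1.0 + sum(x[i] * Rx[i] for i in range(d))
    # Sherman-Morrison: R <- R - (R x)(R x)^T / (1 + x^T R x); R stays symmetric.
    for i in range(d):
        for j in range(d):
            R[i][j] -= Rx[i] * Rx[j] / denom
    gain = [v / denom for v in Rx]  # equals R_new @ x
    pred = [sum(x[i] * W[i][k] for i in range(d)) for k in range(len(y))]
    for i in range(d):
        for k in range(len(y)):
            W[i][k] += gain[i] * (y[k] - pred[k])


def normal_equation_residual(W, samples, n_outputs, gamma=1.0):
    """Largest entry of |(X^T X + gamma I) W - X^T Y|; near zero means W is the joint ridge solution."""
    d = len(W)
    A = [[gamma if i == j else 0.0 for j in range(d)] for i in range(d)]
    B = [[0.0] * n_outputs for _ in range(d)]
    for x, y in samples:
        y = y + [0.0] * (n_outputs - len(y))  # old targets padded for new outputs
        for i in range(d):
            for j in range(d):
                A[i][j] += x[i] * x[j]
            for k in range(n_outputs):
                B[i][k] += x[i] * y[k]
    return max(abs(sum(A[i][j] * W[j][k] for j in range(d)) - B[i][k])
               for i in range(d) for k in range(n_outputs))


# Toy frozen-backbone features: phase 0 has two outputs, phase 1 adds a third.
phase0 = [([1.0, 0.0, 0.5], [1.0, 0.0]), ([0.0, 1.0, 0.5], [0.0, 1.0]),
          ([0.9, 0.1, 0.4], [1.0, 0.0])]
phase1 = [([0.2, 0.1, 1.0], [0.0, 0.0, 1.0]), ([0.1, 0.9, 0.6], [0.0, 1.0, 0.0])]

R, W = make_state(3)
for phase, n_out in ((phase0, 2), (phase1, 3)):
    expand_outputs(W, n_out)
    for x, y in phase:
        update(R, W, x, y)

residual = normal_equation_residual(W, phase0 + phase1, 3)
```

Because the recursion reproduces the joint solution up to floating-point error, nothing learned in phase 0 is lost when phase 1 arrives. The sketch applies to any linear layer trained on fixed features, so the same routine could serve both a concept layer and a classifier.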

Results

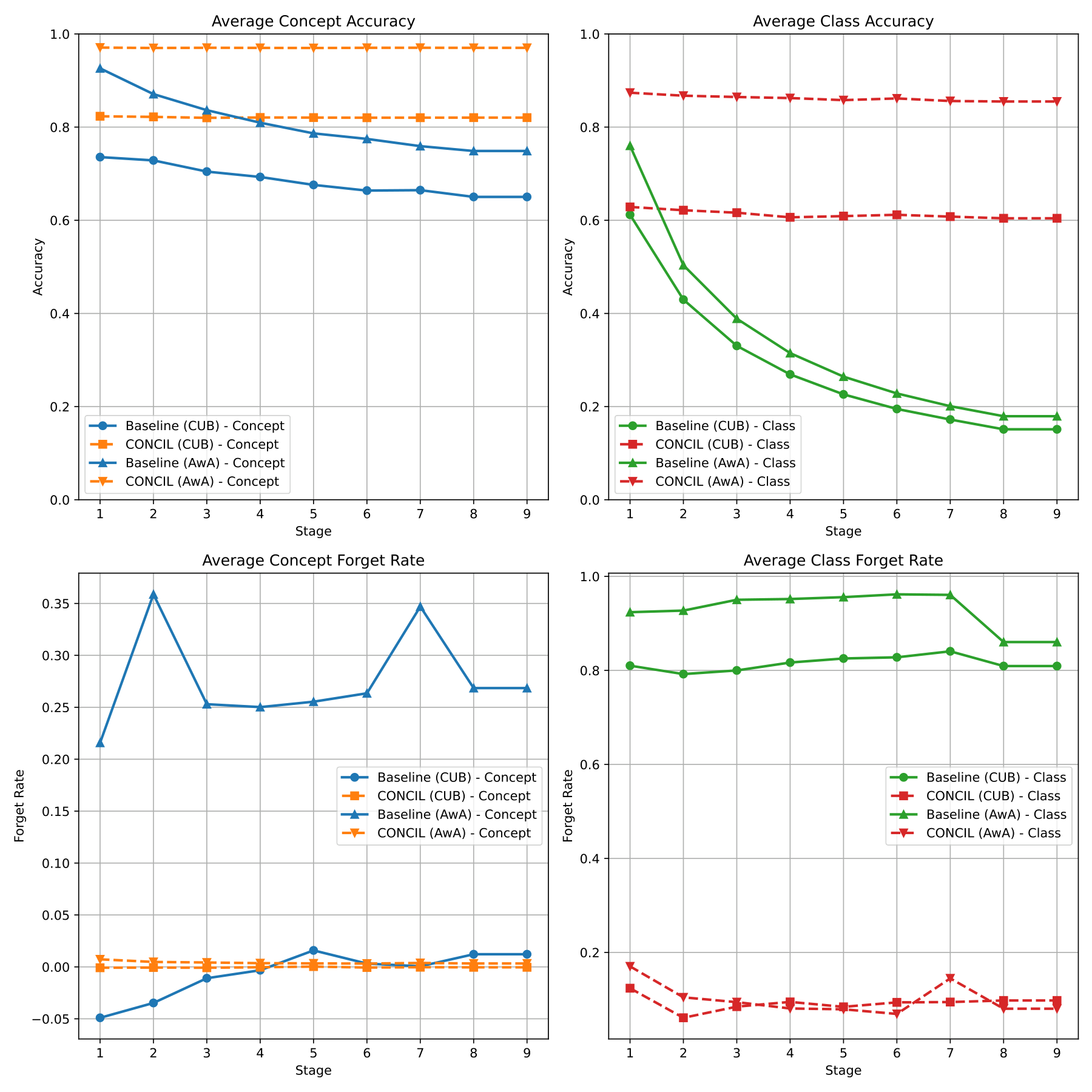

CONCIL vs. baseline: average concept/class accuracy and forgetting rates across phases on CUB and AwA.

Main result figure: CONCIL achieves higher accuracy and lower forgetting rates than the baseline.
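For readers unfamiliar with the metrics, the sketch below shows one common way to compute average accuracy and average forgetting in continual learning. It is an assumption that the paper's definitions follow this convention; the function names are hypothetical and the accuracy values are made-up toy numbers, not results from the paper.

```python
def average_accuracy(acc_row):
    """Mean accuracy over all tasks evaluated after a given phase."""
    return sum(acc_row) / len(acc_row)


def average_forgetting(acc):
    """acc[t][i] is the accuracy on task i after training phase t (i <= t).

    Forgetting for task i is its best earlier accuracy minus its final accuracy,
    averaged over every task except the last one.
    """
    last = len(acc) - 1
    if last == 0:
        return 0.0  # nothing can be forgotten after a single phase
    drops = []
    for i in range(last):
        best = max(acc[t][i] for t in range(i, last))
        drops.append(best - acc[last][i])
    return sum(drops) / len(drops)


# Toy accuracy table (made-up numbers): row t lists tasks 0..t.
acc = [
    [0.80],
    [0.75, 0.70],
    [0.70, 0.68, 0.72],
]
final_avg = average_accuracy(acc[-1])
forgetting = average_forgetting(acc)
```

Under this convention, a method with no forgetting at all would score zero on the second metric regardless of its absolute accuracy.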

Code & Repo

- GitHub (code, data prep, training scripts): github.com/xll0328/CONCIL—ACM-MM-2025-BNI-Track-

- Paper (PDF): arXiv:2411.17471

Citation

@inproceedings{lai2025learning,
  title={Learning New Concepts, Remembering the Old: Continual Learning for Multimodal Concept Bottleneck Models},
  author={Lai, Songning and Liao, Mingqian and Hu, Zhangyi and Yang, Jiayu and Chen, Wenshuo and Xiao, Hongru and Tang, Jianheng and Liao, Haicheng and Yue, Yutao},
  booktitle={Proceedings of the ACM International Conference on Multimedia (ACM MM)},
  year={2025}
}